Bigtargetmedia.com – Artificial intelligence systems now crawl and analyze websites in ways that differ from traditional search engines. As generative AI tools grow more powerful, publishers must help these systems understand which parts of a website contain valuable knowledge. One emerging method uses configuration files that guide AI crawlers, which is where tips for optimizing llm.txt files for AI crawler access become important.

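An llm.txt file works as a communication layer between your website and the crawlers that collect content for large language models. The best-known public proposal for this kind of file, published at llmstxt.org, names it llms.txt and defines it as a Markdown document placed at the site root. Where robots.txt tells search engines which URLs they may crawl, llm.txt points AI systems toward structured, high-value information on your website.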

Think of it as a map designed specifically for AI readers. Instead of forcing the crawler to analyze thousands of pages blindly, the file highlights important resources and signals which content areas deserve attention.
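As a concrete illustration, the llms.txt proposal at llmstxt.org describes the file as plain Markdown: an H1 title, a short blockquote summary, then H2 sections containing lists of links with optional notes. The Python sketch below renders a file in that shape. The site name, section names, and URLs are hypothetical placeholders, and `build_llms_txt` is an illustrative helper, not part of any library.

```python
# Minimal sketch: render an llms.txt-style Markdown file.
# All titles, sections and URLs used here are hypothetical placeholders.

def build_llms_txt(title, summary, sections):
    """Render a title, a one-line summary and {section: [(name, url, note)]}."""
    lines = [f"# {title}", "", f"> {summary}", ""]
    for section, links in sections.items():
        lines.append(f"## {section}")
        lines.append("")
        for name, url, note in links:
            entry = f"- [{name}]({url})"
            if note:  # notes are optional in the proposed format
                entry += f": {note}"
            lines.append(entry)
        lines.append("")
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_llms_txt(
        "Example Site",
        "Guides and tutorials about web publishing.",
        {
            "Guides": [("Getting started", "https://example.com/guides/start.md", "Overview for new readers")],
            "Optional": [("Archive", "https://example.com/archive.md", "")],
        },
    ))
```

Running the script prints the finished file, ready to be saved at the site root. The proposal gives a section titled "Optional" a special meaning: its links are secondary and can be skipped when a shorter context is needed.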

Understanding how to optimize llm.txt files for AI crawler access helps website owners improve discoverability within AI-powered search assistants, generative engines, and knowledge synthesis systems.

Understanding the Role of LLM.txt in AI Crawling

What an LLM.txt File Does for AI Systems

Before applying any tips for optimizing llm.txt files for AI crawler access, it helps to understand how AI crawlers operate.

Large language models rely on crawlers that scan websites for useful information. These crawlers fetch pages and collect their text, which is then used either to train models or to retrieve supporting material when the AI answers a question.

Without guidance, crawlers may waste time scanning low-value sections of a website, such as outdated pages, navigation layers, or duplicated content.

An llm.txt file helps direct crawlers toward meaningful sections of the site. It can highlight documentation pages, educational resources, research articles, and knowledge hubs.
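To see the file from the crawler's side, here is a minimal Python sketch of how a consumer might read an llms.txt-style file and collect the links listed under each section. It assumes the Markdown layout from the llmstxt.org proposal (`## Section` headings followed by `- [name](url): note` items). `parse_llms_txt` is a hypothetical helper; real AI crawlers do not publish their parsing logic.

```python
import re

# One link item: "- [name](url)" optionally followed by ": note".
LINK_RE = re.compile(r"^- \[(?P<name>[^\]]+)\]\((?P<url>[^)\s]+)\)(?::\s*(?P<note>.*))?$")


def parse_llms_txt(text, include_optional=True):
    """Return {section: [(name, url, note)]} for every link item under an H2 heading."""
    sections = {}
    current = None  # links before the first H2 are ignored in this sketch
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            name = line[3:].strip()
            if name == "Optional" and not include_optional:
                current = None  # the proposal lets readers skip this section
            else:
                current = name
                sections.setdefault(current, [])
        elif current is not None:
            match = LINK_RE.match(line)
            if match:
                sections[current].append((match["name"], match["url"], match["note"] or ""))
    return sections
```

A consumer working with a tight context budget could call `parse_llms_txt(text, include_optional=False)` and fetch only the primary sections.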

By guiding the crawler toward valuable content, the website can increase the likelihood that AI systems recognize the site as a credible information source.

Why AI Crawlers Need Clear Content Signals

AI crawlers process websites differently from traditional search bots.

Search engine crawlers focus on indexing pages so they can appear in search results. AI crawlers aim to understand the information within those pages.

This difference means that clarity matters more than ever.

When websites communicate content structure clearly, AI systems can identify relevant knowledge more efficiently. Proper configuration files support that clarity.

This is why optimizing llm.txt files for AI crawler access has become part of modern AI-oriented SEO strategies.

Structuring an Effective LLM.txt File

Organizing Content Paths for AI Crawlers

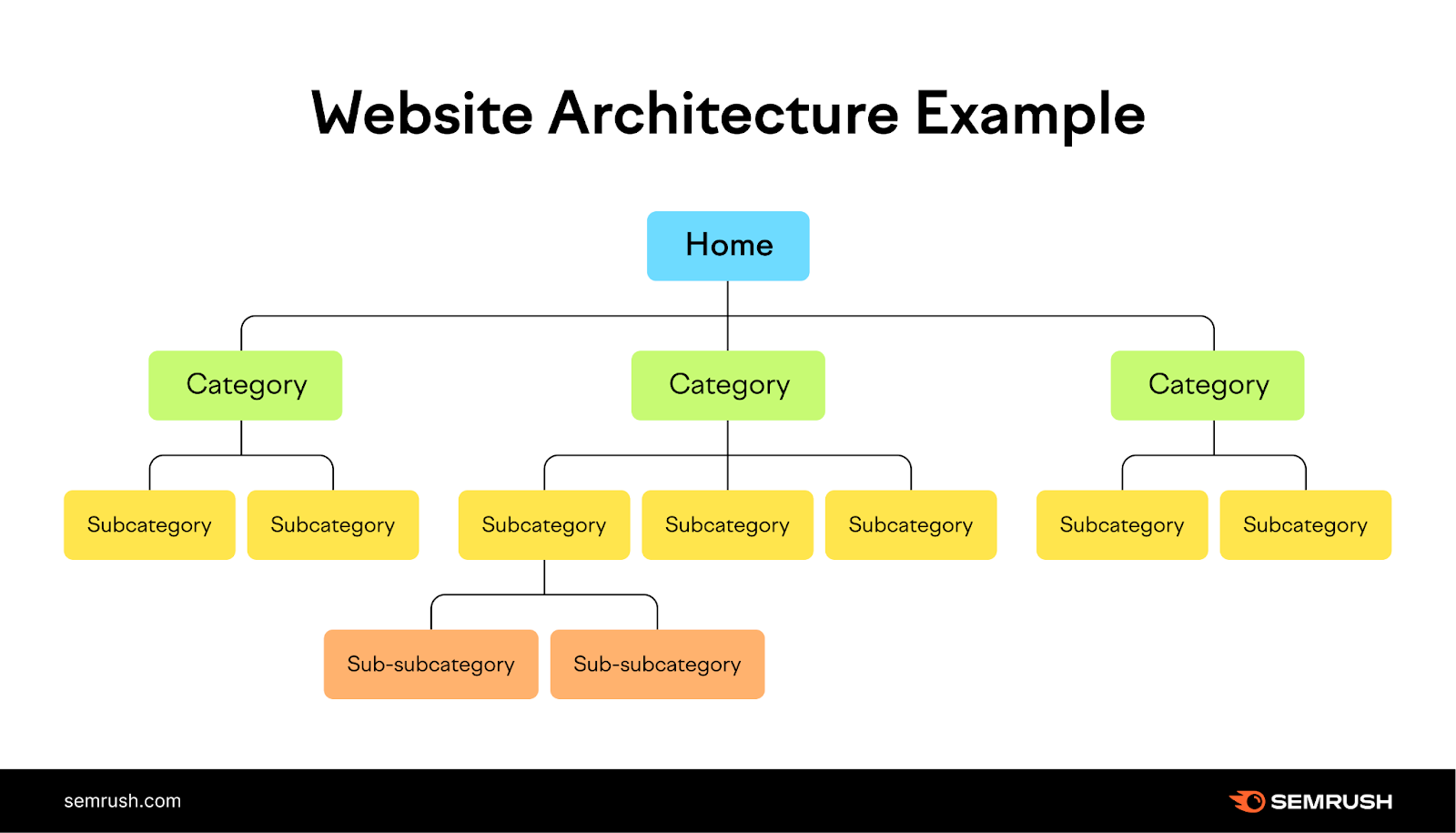

The structure of an llm.txt file determines how effectively AI crawlers interpret a website.

A well-organized file highlights sections of the site that contain knowledge-rich material. These may include guides, research pages, documentation, or educational resources.

When defining paths in the file, clarity becomes essential. Each path should point to substantive content that adds to the site's coverage of its core topic.

For example, a technology website may guide crawlers toward:

Knowledge articles

Technical tutorials

Research reports

Educational blog posts

This structure allows AI systems to focus their analysis on the most informative areas of the website.
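One practical way to build sections like these is to map URL path prefixes to section names. In the Python sketch below, the prefixes (`/knowledge/`, `/tutorials/`, and so on) are hypothetical and should be replaced with the site's real URL structure. URLs that match no prefix are returned separately for manual review instead of being listed automatically.

```python
from urllib.parse import urlparse

# Hypothetical mapping from URL path prefixes to llm.txt section names.
PREFIX_TO_SECTION = {
    "/knowledge/": "Knowledge articles",
    "/tutorials/": "Technical tutorials",
    "/research/": "Research reports",
    "/blog/learn/": "Educational blog posts",
}


def group_by_prefix(urls, mapping=PREFIX_TO_SECTION):
    """Assign each URL to the first section whose prefix matches its path.

    Returns (sections, unmatched), where unmatched URLs need a human decision.
    """
    sections = {name: [] for name in mapping.values()}
    unmatched = []
    for url in urls:
        path = urlparse(url).path
        for prefix, section in mapping.items():
            if path.startswith(prefix):
                sections[section].append(url)
                break
        else:  # no prefix matched this URL
            unmatched.append(url)
    return sections, unmatched
```

Routing unmatched pages to review keeps the file curated: a new URL pattern does not silently end up in, or disappear from, the guidance given to crawlers.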

Following these principles is a core part of optimizing llm.txt files for AI crawler access.

Avoiding Content Noise

Many websites contain sections that provide little informational value. Navigation pages, duplicate content archives, or low-quality landing pages can distract crawlers from meaningful content.

When optimizing an llm.txt file, leave these areas out so that AI systems are not pointed toward them.

Reducing content noise allows crawlers to concentrate on pages that communicate knowledge effectively.

In the same way a researcher ignores irrelevant sources, AI crawlers benefit from clear guidance that directs them toward authoritative information.
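The llms.txt proposal has no exclusion syntax, so the file steers crawlers away from noise only by leaving those URLs out. When a crawler should actually be kept out of an area, robots.txt remains the mechanism. The sketch below uses Python's standard `urllib.robotparser` to check hypothetical rules that block `GPTBot` (the user agent OpenAI documents for its crawler) from tag archives and internal search pages while leaving other paths open.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules: keep GPTBot out of tag archives and
# internal search results, while every other crawler may fetch everything.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /tag/
Disallow: /search

User-agent: *
Allow: /
"""


def is_allowed(url, agent="GPTBot", robots_txt=ROBOTS_TXT):
    """Return True if `agent` may fetch `url` under the given robots.txt rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/guides/llm-txt", "https://example.com/tag/seo"):
        print(url, is_allowed(url))
```

Checking rules this way before deploying them helps confirm that noise is blocked without accidentally hiding the pages the llm.txt file promotes.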

Aligning LLM.txt with Content Strategy

Highlighting Knowledge-Rich Pages

A strong content strategy strengthens the effectiveness of an llm.txt configuration.

Websites that produce educational resources, research insights, and in-depth guides provide valuable material for AI systems.

When optimizing the file, site owners should highlight pages that offer clear explanations and structured information.

AI systems tend to get more out of content that teaches than content that promotes. Educational pages allow the model to extract meaningful insights that support user queries.

This alignment between content quality and crawler guidance is a key part of optimizing llm.txt files for AI crawler access.

Connecting Content Topics Through Structure

Another important aspect involves connecting related topics across the website.

AI systems analyze relationships between concepts. When a website organizes content into logical topic clusters, crawlers gain a clearer understanding of the site’s knowledge structure.

For example, a digital marketing website might connect articles about:

SEO strategy

AI search optimization

Content marketing frameworks

Technical SEO improvements

This structured knowledge network improves how AI systems interpret the website’s expertise.

Technical Best Practices for LLM.txt Optimization

Writing Clear and Simple Instructions

Technical clarity is essential when optimizing llm.txt files for AI crawler access.

Configuration files should remain simple and readable. Clear instructions allow crawlers to interpret the file without confusion.

Complex or ambiguous rules can create crawl inefficiencies. Instead of helping AI systems, overly complicated instructions may reduce the crawler’s ability to locate valuable pages.

A simple structure improves reliability and makes it more likely that the crawler follows the intended guidance.
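Keeping the file simple also makes it easy to check automatically. The hypothetical linter below is an illustrative sketch, not an official validator. It enforces a few rules drawn from the llmstxt.org format: the file opens with a single `# Title` line, and every list item under a `## Section` heading is a Markdown link, optionally followed by `: notes`.

```python
import re

# A list item under an H2 section should look like "- [name](url)" or "- [name](url): notes".
LINK_LINE = re.compile(r"^- \[[^\]]+\]\([^)\s]+\)(?::\s*.*)?$")


def lint_llms_txt(text):
    """Return a list of problems found; an empty list means none were detected."""
    problems = []
    # Keep original 1-based line numbers so messages point at the right place.
    non_blank = [(n, line) for n, line in enumerate(text.splitlines(), start=1) if line.strip()]

    if not non_blank or not non_blank[0][1].startswith("# "):
        problems.append("file should start with a '# Title' line")
    if sum(1 for _, line in non_blank if line.startswith("# ")) > 1:
        problems.append("file has more than one '# Title' line")

    section = None
    for n, line in non_blank:
        if line.startswith("## "):
            section = line[3:].strip()
        elif section is not None and line.startswith("- ") and not LINK_LINE.match(line):
            problems.append(f"line {n}: item in '{section}' is not a '- [name](url)' link")
    return problems
```

An empty result only means these particular checks passed; it says nothing about whether a given AI crawler reads the file.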

Keeping the File Updated

Websites evolve constantly. New pages appear, old content becomes outdated, and site architecture changes.

Because of this evolution, an llm.txt file requires regular updates.

If the file points toward outdated sections, crawlers may continue analyzing information that no longer represents the best knowledge available on the site.

Updating the configuration ensures that AI systems focus on the latest and most valuable content.
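A simple way to catch outdated entries is to compare the links in the file with the site's XML sitemap. The Python sketch below assumes the llm.txt file lists the same canonical URLs that appear in the sitemap (if it links to `.md` copies instead, normalize them first). The function names are hypothetical.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard sitemap files (sitemaps.org protocol).
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Return the set of <loc> URLs listed in a sitemap document."""
    root = ET.fromstring(sitemap_xml)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def diff_against_sitemap(llms_urls, sitemap_xml):
    """Return (stale, unlisted) URL lists, each sorted for stable output."""
    live = sitemap_urls(sitemap_xml)
    listed = set(llms_urls)
    stale = sorted(listed - live)     # in llm.txt but no longer published
    unlisted = sorted(live - listed)  # published but not in llm.txt
    return stale, unlisted
```

Stale entries should usually be removed or updated. Unlisted pages are not necessarily a problem, since the file is meant to be a curated subset of the site, but the list is a useful prompt for review.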

This routine maintenance keeps an llm.txt file useful over the long term.

Preparing Websites for AI Knowledge Extraction

Designing Content for AI Interpretation

Optimizing llm.txt files works best when the website itself communicates knowledge clearly.

AI systems prefer pages that present information logically. Articles should include clear explanations, organized headings, and structured narratives.

When the crawler reaches a page through the llm.txt guidance, it must still interpret the content effectively.

Well-structured articles help the AI identify key insights and contextual relationships.

Content clarity, therefore, complements the technical configuration.
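Heading structure is one aspect of page clarity that can be checked mechanically. This small Python sketch flags Markdown headings that skip a level, such as an H2 followed directly by an H4. It is a hypothetical helper that assumes pages are available as Markdown and, for brevity, does not skip lines inside fenced code blocks.

```python
import re

# ATX-style Markdown heading: 1-6 '#' characters, a space, then the text.
HEADING = re.compile(r"^(#{1,6})\s+(.+?)\s*#*$")


def heading_jumps(markdown):
    """List headings that skip a level relative to the previous heading."""
    issues = []
    previous = 0  # 0 means no heading seen yet
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if not match:
            continue
        level = len(match.group(1))
        if previous and level > previous + 1:
            issues.append(f"'{match.group(2)}' jumps from H{previous} to H{level}")
        previous = level
    return issues
```

Skipped levels are not errors for a browser, but a consistent outline makes it easier for any reader, human or machine, to see how sections relate.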

Encouraging Discoverability in AI Systems

AI-driven discovery depends on how easily systems can access and interpret information.

When websites guide crawlers toward valuable pages and provide clear content structures, the AI gains confidence in the site’s expertise.

Over time, this process can influence whether generative systems reference the website as a knowledge source.

This connection explains why llm.txt optimization increasingly appears in discussions about modern AI-focused SEO.

The Future of AI Crawling and Website Optimization

The Expanding Role of AI Indexing

AI indexing continues to evolve rapidly. As generative search tools grow more sophisticated, they rely on high-quality information sources to generate accurate responses.

Websites that prepare their infrastructure for AI crawlers gain an advantage in this environment.

Configuration files such as llm.txt provide a mechanism for communicating directly with AI systems.

This communication helps guide crawlers toward the most informative sections of a website.

Integrating AI Crawling into SEO Strategy

Traditional SEO practices still matter. Technical performance, content quality, and authority signals remain essential.

However, AI optimization introduces an additional layer that focuses on knowledge accessibility.

Website owners now consider how AI systems interpret and extract information.

Building llm.txt optimization into SEO planning allows websites to adapt to this evolving ecosystem.

Conclusion

Understanding how to optimize llm.txt files for AI crawler access helps websites prepare for the growing influence of generative AI systems in information discovery.

An effective llm.txt file guides AI crawlers toward valuable knowledge pages, reduces content noise, and improves the efficiency of AI indexing. When combined with strong content structure and clear site architecture, this configuration enhances how AI systems interpret a website’s expertise.

As AI-driven search and generative assistants continue expanding, applying these llm.txt optimization tips becomes an important step in keeping valuable website knowledge visible and accessible within the evolving AI information ecosystem.